Introduction

The race to give AI agents a governed understanding of enterprise data has intensified dramatically in early 2026. Snowflake shipped Semantic View Autopilot. Databricks took Unity Catalog Business Semantics to general availability and open-sourced it. Microsoft unveiled Fabric IQ Ontology with MCP endpoints. Google announced Looker BI Agents grounded in a Knowledge Catalog semantic graph. Collibra launched AI-powered Semantic Agents that auto-generate models from glossaries and metadata.

The message from every major platform vendor is now the same: AI agents cannot operate reliably on raw schemas, and the manual process of building the semantic foundation has been the bottleneck. Automate it.

That message is correct. But the way most vendors are automating it reveals a deeper architectural gap that none of them are well-positioned to close on their own, and it is exactly the gap Timbr was built to fill.

What Each Vendor Is Actually Automating

It is worth being precise about what has shipped, because the differences matter more than the marketing suggests.

Snowflake’s Semantic View Autopilot (GA, February 2026) mines query logs, Tableau workbooks, and historical SQL patterns to propose canonical metric definitions. It uses clustering algorithms to find consensus business logic — if hundreds of queries define “active user” the same way, SVA proposes that as the standard. The result is a governed semantic view that powers Cortex Analyst and Cortex Agents. This is metric-governance automation: fast, practical, and tightly scoped to the Snowflake ecosystem.

Databricks Unity Catalog Business Semantics (GA, April 2026) moves metric definitions from the BI layer to the data layer itself. Metrics, dimensions, and context metadata become first-class catalog assets, addressable via SQL and consumable by Genie, AI/BI Dashboards, notebooks, and agents. Databricks also uses AI to bootstrap semantic definitions from existing catalog objects. Agent Bricks, their governed agent platform, leverages this metadata to achieve what Databricks reports as 70% higher accuracy than standard RAG. The core implementation is being open-sourced in Apache Spark — a significant interoperability signal.

Microsoft Fabric IQ Ontology (Preview, expanded at FabCon Atlanta 2026) is the most architecturally ambitious of the platform-native offerings. It goes beyond metrics into a full ontology: business entities, relationships, properties, rules, and actions, connected to analytical, operational, time-series, and geospatial data. Ontologies can be auto-generated from existing Power BI semantic models. At FabCon, Microsoft announced that Fabric IQ Ontology will be accessible through public MCP endpoints, enabling agents beyond the Microsoft ecosystem to ground reasoning in a shared semantic layer.

Google’s Looker BI Agents (announced at Next ’26) trigger downstream business actions grounded in LookML’s semantic layer. A native managed MCP server exposes LookML definitions to external agents. Gemini-powered generation automates semantic model creation from natural language descriptions, SQL queries, and database schemas. Knowledge Catalog integration transforms Looker metadata into a semantic graph for autonomous agent operation.

Collibra’s Semantic Agents (launched late 2025, expanding in 2026) take a governance-first approach: the Semantic Model Generation Agent reads existing business glossaries, metric catalogs, and physical metadata to auto-generate business-ready semantic models. The Semantic Mapping Agent uses ML to link physical data assets to those definitions programmatically, replacing the manual curation bottleneck.

Atlan’s Context Engineering Studio bootstraps semantic models from existing dashboards, query logs, and catalog metadata, then runs an evaluation suite against real business questions before deployment. Atlan draws an explicit distinction between the “semantic layer” (metric definitions) and the “context layer” (governance, lineage, quality signals, and usage patterns wrapped around those definitions), exposing both to agents via an MCP server.

These are serious, well-resourced efforts. None of them are toys. And each addresses a real piece of the problem.

But look at what they have in common and what falls through the gaps.

Three Camps, One Structural Gap

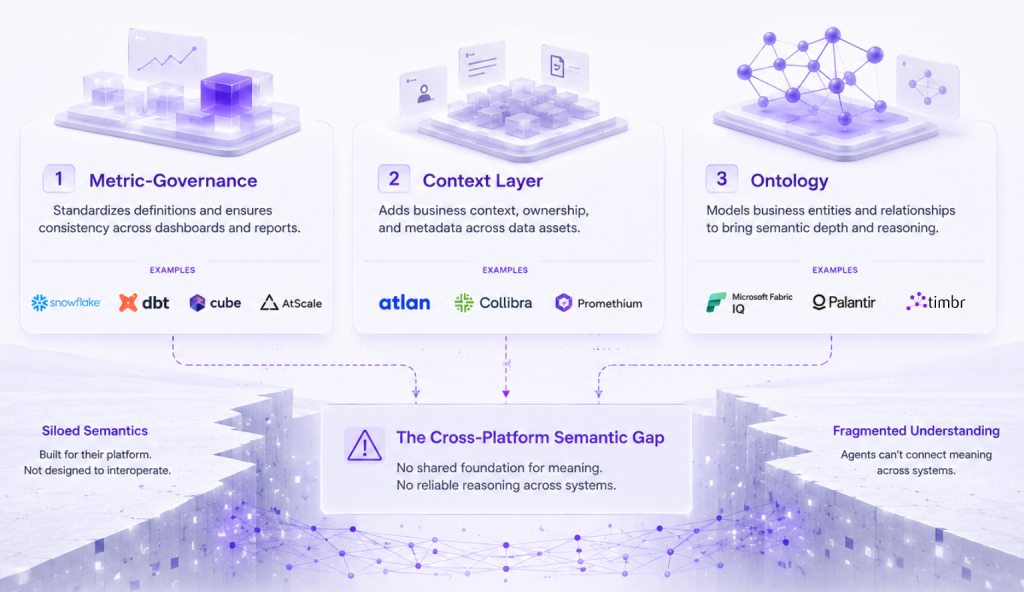

The market is splitting into three architectural camps, each automating a different layer of the semantic foundation:

The metric-governance camp – Snowflake, dbt (MetricFlow + MCP server), Cube (AI API + MCP), AtScale – focuses on ensuring every tool and agent uses the same definitions for revenue, churn, and every other business KPI. Define once, query everywhere. This is where most of the automation innovation has concentrated, and for good reason: inconsistent metric definitions are the single most common source of broken trust in analytics.

The context-layer camp – Atlan, Promethium, and to some extent Collibra – wraps governance metadata, lineage, quality signals, and usage patterns around whatever semantic definitions already exist, then exposes that enriched context to agents via MCP. The semantic layer tells you what “revenue” means. The context layer tells you who owns that definition, when it was last validated, what downstream dashboards depend on it, and whether the underlying data passed quality checks this morning.

The ontology camp – Palantir Foundry, Microsoft Fabric IQ, and Timbr – models not just metrics but the full semantic structure of the business: entities, relationships, hierarchies, rules, constraints, and actions. An ontology does not just tell an agent how to calculate revenue. It tells the agent that a Customer is a type of Account, that an Order has a relationship to a Product through a LineItem, that the relationship between a Supplier and a Factory is governed by a specific contract structure. This is the level of semantic reasoning that separates an agent that retrieves correct numbers from an agent that understands the business.

Each camp solves a real problem. But there is a structural gap that cuts across all three.

The first: almost every one of these approaches is locked to a single platform. Snowflake’s semantic views live in Snowflake. Databricks’ Business Semantics are Unity Catalog objects. Fabric IQ Ontology is a Fabric item. Palantir’s Ontology is deeply embedded in Foundry. For an enterprise with data across SAP, Snowflake, Databricks, Oracle, and PostgreSQL, no single-platform semantic layer provides a unified view.

The second: none of these automation initiatives address how agents actually retrieve and reason over the semantic foundation at query time. Defining metrics and entities is necessary. But when an agent faces a complex, relationship-driven question, it needs more than definitions. It needs a structured graph it can traverse.

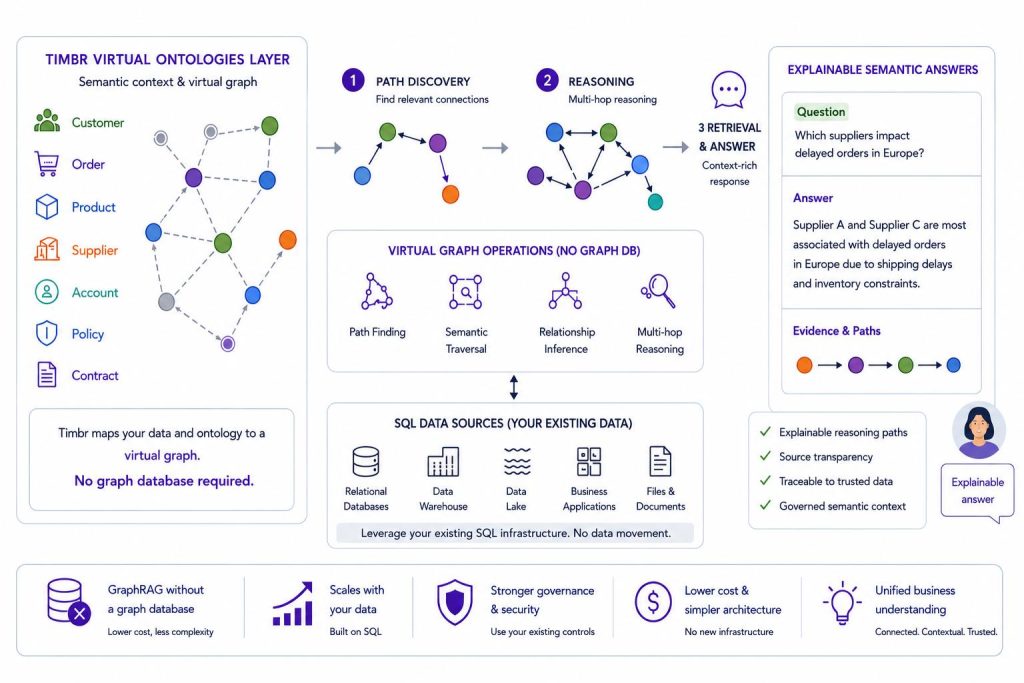

How Timbr Closes the Cross-Platform Gap

The GraphRAG Gap: From Definitions to Retrieval

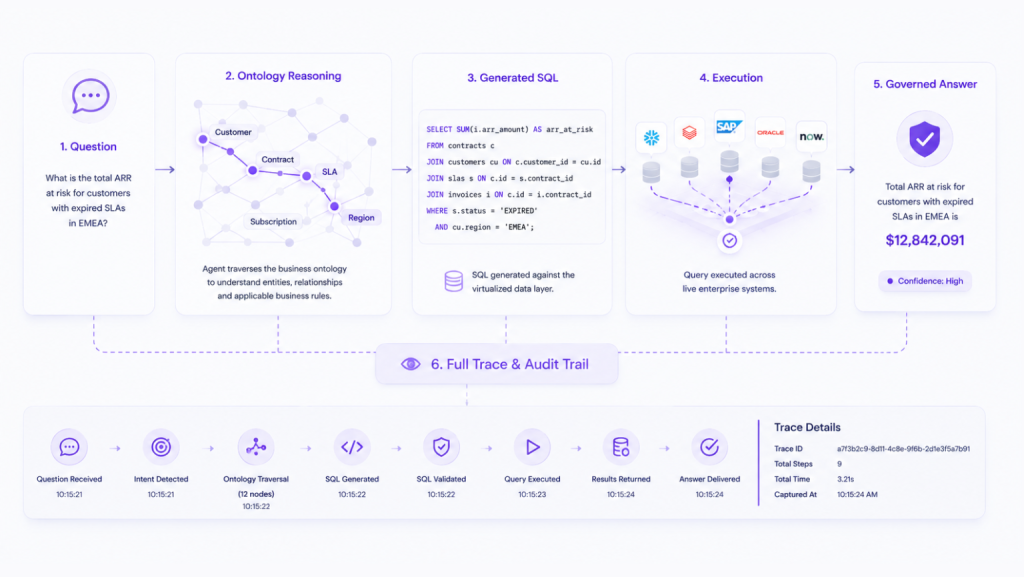

Consider a question an enterprise data agent might face: “Which suppliers serve factories that produce components used in products ordered by our top-tier customers in EMEA?”

That is not a metric lookup. It is a chain of five entity relationships. No amount of consistent metric definitions will help an agent answer it unless the agent can traverse a structured graph of those relationships at retrieval time.

This is the problem GraphRAG solves, and it is rapidly becoming the expected retrieval architecture for enterprise AI agents.

Unlike traditional Retrieval-Augmented Generation, which retrieves isolated document chunks based on vector similarity, GraphRAG leverages structured knowledge graphs to retrieve context by following explicit relationship paths between entities. The result is multi-hop reasoning: an agent moves from supplier to factory to component to product to order to customer, gathering context at each step, because the relationships are explicit and navigable rather than inferred from text similarity.

The evidence for GraphRAG’s importance is now substantial. Gartner classifies knowledge graphs as a “critical enabler” with immediate impact on generative AI. GraphRAG implementations have shown accuracy improvements of up to 3.4x over traditional RAG in enterprise scenarios. An ACM survey published this year provides the first comprehensive academic overview of GraphRAG methodologies, confirming its transition from experimental technique to production architecture.

But here is the architectural question most organizations are not asking: what serves as the knowledge graph that GraphRAG retrieves from?

The Conventional Approach, And Its Problems

The standard GraphRAG pipeline looks like this: extract entities from documents using NER, load them into a graph database like Neo4j, build retrieval over that graph, and feed the results to the LLM. This works for certain use cases, particularly unstructured document analysis, but it introduces significant problems for enterprise data agents:

Infrastructure duplication. A separate graph database means separate infrastructure to provision, maintain, and secure. Data must be extracted from source systems, transformed, and loaded into the graph store. Synchronization pipelines must keep the graph current. For organizations already managing complex data estates across multiple platforms, adding a graph database is adding complexity, not reducing it.

Quality ceiling. The knowledge graph is only as good as the entity extraction that populates it. Automated NER from unstructured documents is inherently noisy, entities are missed, relationships are misclassified, and the resulting graph reflects the accuracy of the extraction model rather than the actual structure of the business. As one recent critique of property graph models for GraphRAG highlighted, many implementations hide meaningful business concepts inside relationship properties, making them difficult for AI systems to interpret and query reliably.

Platform isolation. Even when a graph database is populated correctly, it typically covers a subset of the enterprise’s data, whatever has been extracted and loaded. It does not provide a unified graph across the full data estate.

These are not theoretical limitations. They are the practical reasons why many GraphRAG implementations stall between prototype and production.

The Alternative: The Ontology as the Knowledge Graph

There is a fundamentally different way to approach GraphRAG for enterprise data agents.

If the semantic layer is an ontology, with formally defined entity types, explicit relationships, concept hierarchies, and inherited properties, then the ontology itself is the knowledge graph. There is no need to extract entities from documents and load them into a separate graph store. The business concepts, their relationships, and the logic that governs them already exist as a structured, navigable graph.

The critical architectural distinction is this: the ontology does not need to be materialized as a physical graph. It can be virtual, a semantic graph mapped directly to the underlying relational databases where the data already lives. Relationships are defined logically in the ontology rather than physically duplicated in a graph store. The knowledge graph emerges from the relational model rather than replacing it. The graph is a semantic representation, not a new storage layer.

This is exactly what makes GraphRAG possible without a graph database. An agent querying a virtual ontology is performing GraphRAG: it retrieves context by traversing explicit relationship paths between governed business entities, and those relationship paths resolve at query time to SQL executed against the underlying data sources. The graph traversal is real. The graph infrastructure is not, because it does not need to be.

This is the architectural insight behind Timbr’s approach to GraphRAG, and it is what makes Timbr’s position in the ontology camp fundamentally different from both the platform-native ontologies (Palantir, Microsoft Fabric IQ) and the conventional graph-database-based GraphRAG stack.

We explored this argument in depth in a recent article: GraphRAG Without a Graph Database: Why SQL Ontologies May Be the Better Foundation. The core thesis: GraphRAG is a modeling problem, not a database problem. When relationships and concepts are clearly represented in a formal ontology, even a virtual one mapped to relational sources, AI systems can reason over them more effectively than they can over a physical property graph where business concepts are hidden inside edge attributes.

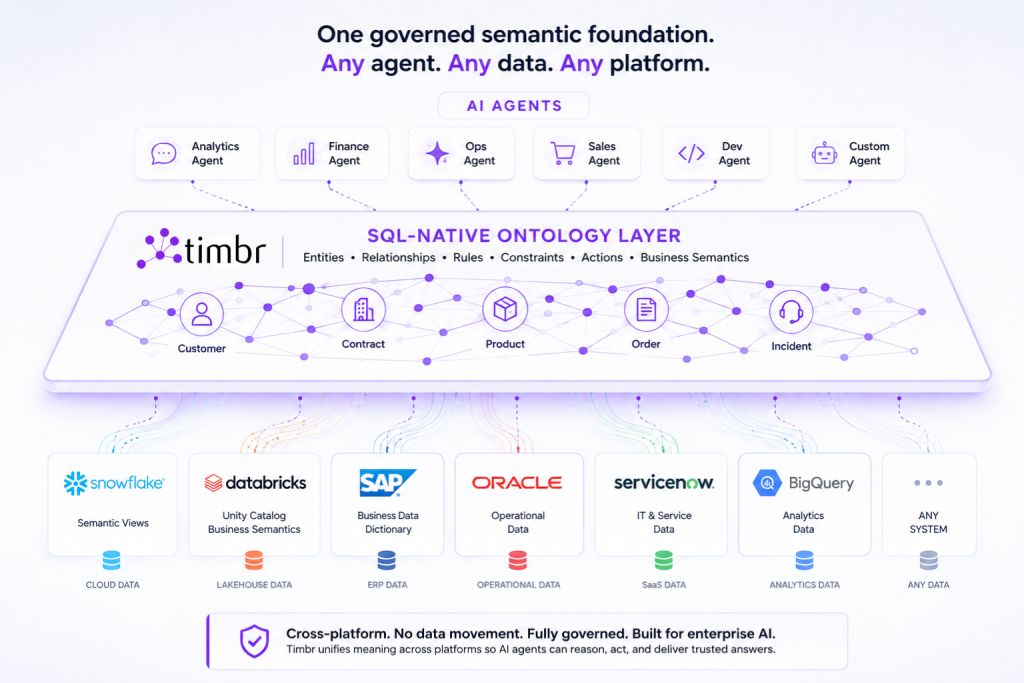

How Timbr Delivers Ontology-Powered GraphRAG Across Platforms

Timbr provides a virtual, ontology-based semantic layer that connects to data sources across platforms, Databricks, Snowflake, SAP, Oracle, PostgreSQL, SQL Server, Google BigQuery, and others, and models them into a unified graph of business concepts, relationships, hierarchies, and measures. The ontology is mapped to the underlying databases, not materialized into a separate store. A Customer is defined once. The relationship between Product and Order is defined once. Revenue is calculated once. Every agent that operates within this environment works from the same governed definitions and traverses the same virtual knowledge graph, regardless of which LLM powers it, which BI tool consumes it, or which data platform stores the underlying data.

Four capabilities make this architecturally distinct from anything the platform vendors or the conventional GraphRAG stack provide:

1. Cross-Platform Data Virtualization

Because Timbr’s ontology is virtual, it queries data where it lives. There is no data movement, no replication, no ETL into a separate semantic store or graph database. The ontology maps business concepts and relationships to the physical tables, columns, and joins across each connected source. At query time, those mappings resolve to SQL that executes on the native compute engine of each data platform, pushdown to Snowflake’s warehouse, Databricks’ clusters, SAP HANA, Oracle, or any other connected source.

The semantic layer reflects the current state of the data at all times, precisely because it is virtual rather than materialized. There is no synchronization lag between a physical graph store and the source systems. An agent can join a concept modeled from SAP data with a concept modeled from Snowflake data through the ontology’s relationship graph, without either system knowing about the other. The cross-platform join is resolved at the semantic layer through virtualization, with query execution pushed down to each source.

This is also what makes Timbr’s GraphRAG capabilities cross-platform by default. An agent performing multi-hop reasoning, traversing from a supplier entity mapped to SAP to an order entity mapped to Snowflake to a customer segment mapped to Databricks, does so through a single, unified virtual knowledge graph. The graph traversal spans platform boundaries because the ontology spans them. No other GraphRAG implementation provides graph-structured retrieval across multiple data platforms without data replication or a centralized graph database.

2. Ontological Reasoning as Graph Traversal

Timbr’s ontology defines concept hierarchies using formal class inheritance. A VIP Customer is a Customer is an Account. Properties and measures defined at any level of the hierarchy are inherited by all descendant concepts. When an agent queries “total revenue for VIP Customers,” it does not need to know the filter logic that distinguishes a VIP Customer from a regular Customer, that logic is embedded in the ontology’s concept definition and applied automatically when the virtual mapping resolves to SQL.

Relationships between concepts are first-class objects in the ontology, not implicit JOIN paths inferred from foreign keys or edge properties in a graph database. The relationship between an Order and a Product passes through a LineItem. The relationship between a Patient and a Diagnosis passes through an Encounter. These are explicit, governed definitions that the agent navigates directly, the same graph traversal that makes GraphRAG powerful, but grounded in governed business semantics rather than NER-extracted entity graphs, and resolved through virtual mappings to relational sources rather than a physical graph store.

This is also why SQL works as the query language for Timbr’s GraphRAG. Because the ontology is virtual, mapped to relational databases, every graph traversal ultimately resolves to SQL. And as we argued in the earlier article, LLMs are remarkably proficient at generating SQL, far more so than Cypher, SPARQL, or other graph query languages. When the ontology exposes relationships as SQL-queryable structures, the agent can perform graph-structured retrieval using the query language it is most reliable at generating. The semantic model makes meaning explicit. The virtual mapping makes it queryable. The SQL interface makes it accessible to any LLM.

3. LLM-Powered Auto-Mapping: Automating the Ontology Itself

The automation challenge that Snowflake addresses with Semantic View Autopilot and Collibra addresses with its Semantic Agents, eliminating the manual bottleneck of semantic model creation, is equally critical for ontology-based approaches.

Timbr uses LLM-powered auto-mapping to scan existing catalog structures, including Unity Catalog, Snowflake’s Information Schema, and other metadata sources, and automatically generate ontology definitions: concept types, properties, relationships, and the virtual mappings that connect them to the underlying data sources. What would otherwise be weeks of manual modeling becomes a starting point that domain experts refine rather than build from scratch.

The auto-mapping process works in three stages:

- Schema discovery and classification. Timbr scans the connected data sources, reads table and column metadata, and uses LLM reasoning to classify tables into candidate business concepts (e.g., a table with customer_id, name, email, and signup_date is classified as a “Customer” concept candidate) and generate the virtual mappings that connect each concept to its physical source.

- Relationship inference. Foreign key relationships, naming patterns, and structural similarities are analyzed to propose formal ontology relationships between concepts. The LLM distinguishes between structural relationships that reflect genuine business meaning and incidental schema patterns that do not. Each inferred relationship becomes a navigable edge in the virtual knowledge graph.

- Hierarchy and measure generation. Based on column types, naming conventions, and existing documentation, the auto-mapper proposes concept hierarchies (e.g., VIP Customer as a subclass of Customer) and measure definitions (e.g., identifying amount columns as candidates for revenue measures with appropriate aggregation logic).

The output is a draft virtual ontology, and therefore a draft knowledge graph ready for GraphRAG, that teams can review, adjust, and govern. Every auto-generated definition and mapping is visible, editable, and subject to the same governance controls as manually created definitions.

4. Integration with Existing Platform Semantics

Timbr does not require organizations to abandon the semantic investments they have already made. It integrates natively with Unity Catalog, exposing semantic concepts as Databricks catalogs and schemas. Governance policies, row-level security, column masking, SSO, are applied harmoniously with existing platform-level controls.

For organizations that have already built metric definitions in dbt, Snowflake Semantic Views, or Power BI models, Timbr’s virtual ontology sits above these definitions as a cross-platform unification layer. The platform-native semantics remain the source of truth within their respective ecosystems. Timbr adds the cross-platform relationships, concept hierarchies, ontological reasoning, and GraphRAG capabilities that no single platform can provide on its own, without requiring data movement, a graph database, or any change to existing infrastructure.

MCP, OSI, and the Agent Interface Layer

Two infrastructure developments are reshaping how semantic layers connect to AI agents, and both matter for evaluating any vendor’s approach.

Model Context Protocol (MCP) has become the de facto standard for how agents consume semantic context. Looker, dbt, Cube, AtScale, Atlan, and Microsoft Fabric have all announced or shipped MCP servers. Any semantic layer that does not expose its definitions through MCP is increasingly invisible to the growing ecosystem of MCP-compatible agents, including Claude, Cursor, Copilot Studio, and enterprise agent frameworks built on LangChain and similar tooling.

Open Semantic Interchange (OSI) standardizes how semantic definitions are represented and exchanged across tools, using a vendor-neutral YAML-based specification. The v1.0 spec was published in January 2026 with 50+ partners including Snowflake, Databricks, dbt Labs, Salesforce, JPMC, and AWS. OSI solves the portability problem: definitions can travel between tools. It does not solve the unification problem: making those definitions queryable, and traversable, as a single model across platforms.

For organizations evaluating their semantic architecture, the practical question is: does your semantic foundation serve definitions to agents (via MCP), ensure those definitions are portable (via OSI), provide them as a unified model across your actual data landscape (via cross-platform virtualization), and enable graph-structured retrieval so agents can reason across entity relationships (via ontology-powered GraphRAG)? Most current solutions address one or two of these requirements. Very few address all four.

Observability: Knowing What the Agent Knew

One dimension of the semantic foundation that is often overlooked in the automation conversation is observability, not just whether the agent returned a correct answer, but whether you can determine why it returned that answer and what path it traversed through the knowledge graph to reach it.

Every Timbr Data Agent interaction is logged at the execution level: the reasoning path the agent followed through the ontology, the relationships it traversed, the SQL it generated, the data it returned, the token cost at each step, and whether any retries occurred. Every conversation can be inspected. Every chain trace can be drilled into. The exact prompt constructed from the ontology context is visible and auditable.

This is not a debugging convenience. It is what makes it possible to run agents in production with confidence rather than hope. When a result is questioned, you can trace exactly where the reasoning went, which relationships the agent traversed, which concept definitions it applied, and whether it followed the intended path through the knowledge graph. When something is working well, you can understand why and replicate it across other domains.

An agent you cannot audit is an agent you cannot trust. An agent grounded in a governed, inspectable ontology, one that doubles as a knowledge graph for GraphRAG, is an agent whose reasoning you can verify end to end.

The Question Worth Asking Before You Build

The next time a vendor presents a data agent demo, or an automated semantic layer generator, the questions worth asking go beyond how impressive the automation is. They should probe what the resulting semantic foundation actually enables at query time.

Does it span the data platforms your enterprise actually uses, or only the vendor’s own ecosystem? Does it model business entities and relationships, or only metric definitions? Can agents traverse those relationships for multi-hop reasoning, or does retrieval stop at single-entity lookups? Does graph-structured retrieval require a separate graph database, or is the semantic model itself navigable? Can it be consumed by agents outside the vendor’s own tooling, through standard protocols? And can you inspect exactly what the agent knew, what path it traversed, and what it inferred, when it generated a specific answer?

The hardest problem in data agents has never been orchestration, retrieval, or natural language translation in isolation. It is giving AI systems a reliable, governed, cross-platform understanding of what enterprise data actually means, at the level of business concepts and their relationships, consistently across every platform where enterprise data lives, with graph-structured retrieval that enables agents to reason rather than guess.

The vendors automating metric governance are solving a necessary problem. The vendors building ontologies are solving a deeper one. Timbr solves both, and delivers GraphRAG across the platforms where enterprise data actually lives, without requiring a graph database.

See It in Practice

Explore how Timbr’s ontology-based semantic layer gives data agents a governed understanding of your business across Databricks, Snowflake, SAP, and beyond.