Thanks to Rajesh Iyer, Global Head of AI & ML for Banking, Capital Markets, P&C, Life & Health Insurance at Capgemini, for sharing the material used in this post.

Introduction

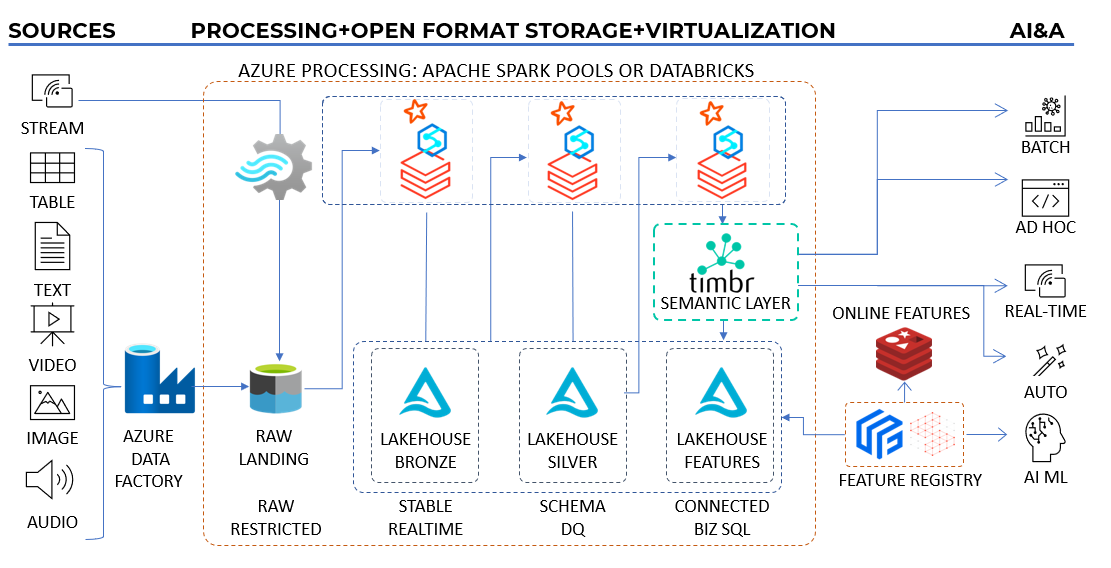

Timbr, combined with the Databricks Lakehouse, powers the Insights First Architecture, which aims to enable enterprise data consumption patterns that deliver insights efficiently and, potentially, faster.

This post explores the concept of Insight First Architecture and its significance in driving effective business solutions with the aid of Timbr.

Challenges of Legacy Architectures

Legacy data architectures often struggle to accommodate the diverse and evolving enterprise data consumption patterns. This challenge becomes more pronounced as unstructured data takes center stage in generating valuable insights for enterprises.

The Insights First Architecture is designed to address the problems associated with legacy architectures:

1. Intermodal Container

Implementing a standard and flexible data format ensures compatibility across different systems, while adopting an open format promotes data ownership and portability.

2. Distribution Modality

Providing cost-benefit rules and the flexibility to switch between different data distribution approaches optimizes resource allocation.

3. Invisible Intelligence

Treating JOINs as relationships and developing a deep understanding of the firm’s structure enables intelligent data linking and inference capabilities.

4. Language Barrier

Simplifying business concepts and offering a straightforward way to query and interact with the data enables effective communication between business users and the architecture.

5. Make It Easy to Shop

Treating data as a product and providing a flexible platform for accessing and utilizing data simplifies the process of data discovery and acquisition.

6. Overcome Analysis Paralysis

Recognizing that more data doesn’t always lead to better insights, the architecture promotes starting with a focused and targeted view of data, allowing for a more meaningful analysis.
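As an illustration of the "Invisible Intelligence" point above (treating JOINs as relationships), here is a minimal Python sketch. It is not Timbr's API: the `Relationship` class, the `build_query` helper, and the `customer`, `account`, and `txn` tables with their key columns are all hypothetical names chosen for this example. The idea shown is only that a relationship is declared once, and a business-level path such as "customer holds accounts that have transactions" is then expanded into the JOIN clauses automatically.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Relationship:
    """A named link between two tables (hypothetical model, not Timbr's)."""
    source: str
    target: str
    source_key: str
    target_key: str


def build_query(start: str, path: List[str], select: str,
                relationships: Dict[str, Relationship]) -> str:
    """Expand a path of relationship names into explicit SQL JOIN clauses."""
    sql = f"SELECT {select} FROM {start}"
    current = start
    for name in path:
        rel = relationships[name]
        if rel.source != current:
            # The path must follow relationships that actually connect.
            raise ValueError(f"'{name}' does not start at '{current}'")
        sql += (f" JOIN {rel.target} ON "
                f"{rel.source}.{rel.source_key} = {rel.target}.{rel.target_key}")
        current = rel.target
    return sql


# Relationships are declared once (illustrative schema only).
RELATIONSHIPS = {
    "holds": Relationship("customer", "account", "id", "customer_id"),
    "has_transactions": Relationship("account", "txn", "id", "account_id"),
}

query = build_query("customer", ["holds", "has_transactions"],
                    "customer.name, txn.amount", RELATIONSHIPS)
print(query)
```

The user of such a model names relationships rather than join keys, which is the sense in which the join logic becomes "invisible" to the data consumer.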

Insight First Architecture

The term “Insight First Architecture” was coined by Raghu Chandra, Capgemini Financial Services Insights & Data Cloud Leader. It emphasizes the importance of prioritizing business needs and understanding the value of insights over the sheer quantity of data.

Instead of letting data science and technology drive solutioning, the focus should shift to business individuals taking an active interest in understanding the what and how of technology and data science.

In simpler terms, the goal is to work with realistic and actionable data and continuously expand its scope, rather than striving to acquire all available data. The primary emphasis lies in defining, measuring, and maximizing the value of insights.

The Last Mile: Data to Dollars

Insight First Architecture aims to enable enterprise data consumption patterns that facilitate efficient insight delivery and potential acceleration.

To do so, the architecture is designed as an Internal Developer Platform, facilitating the transfer of data from insight-driven initiatives to the experimentation backlog generated by the Use Case factory.

By streamlining this process, the architecture enhances the organization’s ability to extract value from data, enabling effective decision-making and driving business success.

Several key components contribute to achieving this goal:

1. Modern Data Architecture

By leveraging state-of-the-art (SOTA) data architectures and SOTA compute architectures, the Insights First Architecture builds on the capabilities of the Databricks Lakehouse architecture. This approach ensures a coherent and purpose-built foundation for accommodating various data consumption patterns.

2. Semantic Graph Capabilities

The integration of the Timbr semantic graph and PySpark enhances the architecture’s data consumption capabilities for business users and data scientists. Leveraging graph machine learning (Graph ML), these capabilities enable the extraction of valuable insights from the interconnectedness of data.

3. Gen AI Enablement

The Insights First Architecture enables the utilization of AI and ML within PySpark pipelines, specifically when processing unstructured data. This incorporation of AI and ML throughout the data pipeline shifts the focus from the traditional “data, data, data, and finally ML” approach to a more intertwined relationship between data and AI/ML.
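To make the contrast with "data, data, data, and finally ML" concrete, the sketch below places a model-like step in the middle of a small pipeline over unstructured text, so that later data steps depend on its output. It is written in plain Python rather than PySpark to stay self-contained, and everything in it is an assumption for illustration: the stage names, the sample claim notes, and the keyword-based `score_urgency` function, which is a toy stand-in for a real model call.

```python
from typing import Callable, Dict, Iterable, List

Record = Dict[str, object]
Stage = Callable[[Iterable[Record]], Iterable[Record]]

# Toy stand-in for a trained model's vocabulary (hypothetical).
URGENT_WORDS = {"delay", "denied", "complaint"}


def clean(records: Iterable[Record]) -> Iterable[Record]:
    """Data step: trim whitespace and drop empty notes."""
    for r in records:
        text = str(r["text"]).strip()
        if text:
            yield {**r, "text": text}


def score_urgency(records: Iterable[Record]) -> Iterable[Record]:
    """ML step placed mid-pipeline (keyword match stands in for a model)."""
    for r in records:
        words = set(str(r["text"]).lower().split())
        yield {**r, "urgent": bool(words & URGENT_WORDS)}


def route(records: Iterable[Record]) -> Iterable[Record]:
    """Data step that consumes the model output to decide routing."""
    for r in records:
        yield {**r, "queue": "priority" if r["urgent"] else "standard"}


def run_pipeline(records: Iterable[Record], stages: List[Stage]) -> List[Record]:
    """Apply each stage in order; stages are lazy generators."""
    for stage in stages:
        records = stage(records)
    return list(records)


notes = [
    {"id": 1, "text": "  Claim denied after long delay "},
    {"id": 2, "text": "   "},
    {"id": 3, "text": "Thanks for the quick payout"},
]
result = run_pipeline(notes, [clean, score_urgency, route])
for row in result:
    print(row["id"], row["queue"])
```

In a PySpark setting the same shape would typically be expressed as successive DataFrame transformations, with the scoring step implemented as a model inference call; that mapping is left out here because it depends on the specific model and cluster setup.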

Conclusion

The Insights First Architecture combines Azure Data Factory, Azure processing with Databricks, and the Timbr semantic layer to deliver a comprehensive approach to data consumption, offering a transformative solution for businesses.

By prioritizing insights, leveraging modern data architectures, and addressing legacy challenges, enterprises can unlock the full potential of their data assets, leading to improved decision-making and tangible business outcomes.

How do you make your data smart?

Timbr virtually transforms existing databases into semantic SQL knowledge graphs with inference and graph capabilities, so data consumers can deliver fast answers and unique insights with minimum effort.